Motivation for OO

The Quality of Classes and OO Design

OO didn’t come out of the blue…

OO has strong historical roots in other paradigms and practices. It came about to address problems commonly grouped together as the “software crisis”.

Applied improperly, or by people without the skills, knowledge, and experience, it doesn’t solve any problems, and might even make things worse. It can be an important piece of the solution, but isn’t a guarantee or a silver bullet.

Complexity

Software is inherently complex because

- we attempt to solve problems in complex domains

- we are forced by the size of the problem to work in teams

- software is an incredibly malleable building material

- discrete systems are prone to unpredictable behavior

- software systems consist of many pieces, many of which communicate

This complexity has led to many problems with large software projects. The term “software crisis” was first used at a NATO conference in 1968. Can we call something that old a crisis? The “software crisis” manifests itself in

- cost overruns

- user dissatisfaction with the final product

- buggy software

- brittle software

Some factors that impact on and reflect complexity in software:

- The number of names (variables, functions, etc) that are visible

- Constraints on the time-sequence of operations (real-time constraints)

- Memory management (garbage collection and address spaces)

- Concurrency

- Event-driven user interfaces

How do humans cope with complexity in everyday life?

Abstraction

Humans deal with complexity by abstracting details away. For example:

- Driving a car doesn’t require knowledge of the internal combustion engine; it is sufficient to think of a car as simple transport.

- Stereotypes are negative examples of abstraction.

To be useful, an abstraction (model) must be smaller than what it represents. Compare a road map with photographs of the terrain or with a physical model of it.

Exercise 1

Memorize as many numbers from the following sequence as you can. I’ll show them for 30 seconds. Now write them down.

1759376099873462875933451089427849701120765934

- How many did you remember?

- How many could you remember with unlimited amounts of time?

Exercise 2

How many of these concepts can you memorize in 30 seconds?

Miller (Psychological Review, vol 63(2)) “The Magical Number Seven, Plus or Minus Two: Some Limits on Our Capacity for Processing Information”

Exercise 3

Write down as many of the following telephone numbers as you can…

- Home:

- Office:

- Boss:

- Co-worker:

- Parents:

- Spouse’s office:

- Fax:

- Friend1:

- Friend2:

- Local Pizza:

By abstracting the details of the numbers away and grouping them into a new concept (telephone number) we have increased our information handling capacity by nearly an order of magnitude.

Working with abstractions lets us handle more information, but we’re still limited by Miller’s observation. What if you have more than 7 things to juggle in your head simultaneously?

Hierarchy

A common strategy is to form a hierarchy to classify and order our abstractions. Examples: the military, large companies, the Linnaean classification system.

Decomposition

Divide and conquer is a handy skill for many thorny life problems. One reason we want to compose a system from small pieces, rather than build a large monolithic system, is that the former can be made more reliable. Failure in one part, if the system is properly designed, won’t cause failure of the whole. This depends on the issue of coupling.

Tightly coupled systems of many parts are actually less reliable. If each part has a probability $p$ of being implemented correctly, and there are $N$ parts that must all work, then (assuming the parts are independent) the chance of the system working is $p^N$. When $N$ is large, the system will operate only if $p$ is very close to 1.
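To get a feel for how quickly this degrades, here is a small Python sketch. It assumes that every part must work and that parts are correct independently of one another; the function name and the sample values of p and N are illustrative only.

```python
def system_reliability(p: float, n: int) -> float:
    """Chance that all n parts work, assuming each part is
    independently correct with probability p (p to the power n)."""
    return p ** n


# Illustrative values: each part is 99% likely to be correct.
for n in (10, 100, 1000):
    print(n, system_reliability(0.99, n))
```

With p = 0.99, a system of 100 such parts works only about 37% of the time.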

We can beat this grim view of a system composed of many parts by properly decomposing and decoupling.

Another reason is that we can divide up the work more easily.

Postpone obligation

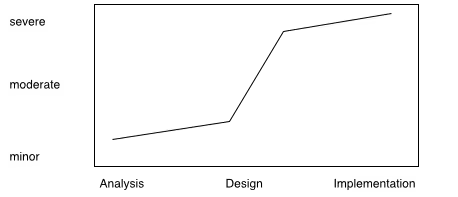

Delaying decisions that bind you in the future for as long as possible preserves flexibility. Deciding too quickly makes change more likely. The cost of change depends on when it occurs. Consider the traditional waterfall development model, and the cost of change during the lifecycle of a project.

OO Technology

Abstractions

Nothing unique about forming abstractions, but in OO this is a main focus of activity and organization.

We can take advantage of the natural human tendency to anthropomorphise.

We’ll call our abstractions objects.

Hierarchy

We’ll put our abstractions into a hierarchy to keep them organized and minimize redundancy.

This can be overrated in OO.
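As a minimal sketch of how a hierarchy can remove redundancy, the Python below puts shared behavior in a parent class once and lets each subclass supply only what differs. The class names (`Shape`, `Circle`, `Square`) are hypothetical and chosen purely for illustration.

```python
import math


class Shape:
    """Parent abstraction: holds what all shapes have in common."""

    def area(self) -> float:
        raise NotImplementedError("each subclass supplies its own area")

    def describe(self) -> str:
        # Written once here, inherited by every subclass.
        return f"{type(self).__name__} with area {self.area():.2f}"


class Circle(Shape):
    def __init__(self, radius: float) -> None:
        self.radius = radius

    def area(self) -> float:
        return math.pi * self.radius ** 2


class Square(Shape):
    def __init__(self, side: float) -> None:
        self.side = side

    def area(self) -> float:
        return self.side ** 2


for shape in (Circle(1.0), Square(2.0)):
    print(shape.describe())
```

Only `area` is repeated per class; `describe` exists in one place.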

Decomposition

Whether we do our decomposition from a procedural, or algorithmic, point of view or from an OO point of view, the idea is the same: divide and conquer, avoid thinking of too much at once, use a hierarchy to focus our efforts.

In algorithmic decomp, we think in terms of breaking the process down into progressively finer steps. The steps, or the algorithm, are the focus.

The problem with an algorithmic or top-down design is that if we make the wrong top-level decisions, we end up having to do all sorts of ugly things down at the leaves of the decomposition tree to get the system to work. The killer is that it is hard to judge or test what the good decompositions are at the topmost level, when we know the least about the problem.

In OO decomp, we think in terms of identifying active, autonomous agents which are responsible for doing the work, and which communicate with each other to get the overall problem solved.
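A rough Python sketch of this style of decomposition follows. The heating example and every name in it (`Sensor`, `Heater`, `Thermostat`, `regulate`) are hypothetical; the point is only that each object owns one responsibility and the work gets done by objects sending each other messages.

```python
class Sensor:
    """Knows how to report a temperature; nothing else."""

    def __init__(self, reading: float) -> None:
        self.reading = reading

    def temperature(self) -> float:
        return self.reading


class Heater:
    """Responsible only for its own on/off state."""

    def __init__(self) -> None:
        self.on = False

    def switch(self, on: bool) -> None:
        self.on = on


class Thermostat:
    """Coordinates the other two objects by sending them messages."""

    def __init__(self, sensor: Sensor, heater: Heater, target: float) -> None:
        self.sensor = sensor
        self.heater = heater
        self.target = target

    def regulate(self) -> None:
        # Ask the sensor for its reading, then tell the heater what to do.
        self.heater.switch(self.sensor.temperature() < self.target)


heater = Heater()
Thermostat(Sensor(17.5), heater, target=20.0).regulate()
print(heater.on)
```

An algorithmic decomposition of the same problem would instead start from a single "regulate temperature" procedure and refine it into steps.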

Advantages of OO decomposition

- smaller systems through re-use of existing components

- evolution from smaller, working models is inherent

- natural way to “divide and conquer” the large state spaces we face

Postponing commitment

In OO, our implementation decisions are easier to postpone since they aren’t visible.
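One way to picture this, as a sketch only: in the hypothetical `Stack` class below, callers depend on `push`, `pop`, and `is_empty`, while the choice of storage stays private and can be revisited later without touching calling code.

```python
class Stack:
    """Callers see push, pop, and is_empty; how items are stored is hidden."""

    def __init__(self) -> None:
        # Private detail: a Python list today, but it could later become
        # a linked list or another structure without changing any caller.
        self._items = []

    def push(self, item) -> None:
        self._items.append(item)

    def pop(self):
        return self._items.pop()

    def is_empty(self) -> bool:
        return not self._items


s = Stack()
s.push("a")
s.push("b")
print(s.pop())
```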

Analysis and Design: more work up-front pays off in the long run.

- Design for genericity – danger of taking too far.

- Problem of knowing enough of the requirements, tendency to change.

Revolution vs. Evolution

Object-oriented technology is both an evolution and a revolution. As evolution, it is the logical descendant of high-level languages (HLLs), procedures, libraries, structured programming, and abstract data types. The revolution of OO technology is on a personal basis. People who are proficient in a structured programming world have learned to think in terms of algorithmic decomposition. This was hard to learn and is even harder to unlearn.

Computing as simulation

The primary difference between OT (object technology) and structured HLLs is the fidelity of the abstraction to the real world. Fortran forces you into working with abstractions that are computer-language oriented (real, array, do/while). OT lets you describe your problem in terms of the problem space. In other words, OT is a modeling and simulation tool, whereas traditional HLLs are simply descriptors of algorithms.

Where does C sit in this regard? Structures but no behavior.

The origins of OO programming are found in languages built for simulation.

Why OO technology now?

30 years old, so why only now ubiquitous? More pressure on business to compete (globalization, need for greater productivity, flexibility, innovation, decentralization, empowered users).

Best current means of dealing with complexity (there will be something else someday).

Working complex systems

Booch identifies five common characteristics to all complex systems:

- There is some hierarchy to the system.

  - A minute ago we viewed hierarchy as something we did to cope with complexity. This view says that a complex system is always hierarchic. Perhaps people can only see complex systems in this way.

- The primitive components of a system depend on your point of view.

  - One man’s primitive is another’s complexity.

- Components are more tightly coupled internally than they are externally.

  - The components form clusters independent of other clusters.

- There are common patterns of simple components which give rise to complex behavior.

  - The complexity arises from the interaction or composition of components, not from the complexity of the components themselves.

- Complex systems which work evolved from simple systems which worked.

  - Or stated more strongly: complex systems built from scratch don’t work, and cannot be made to work.